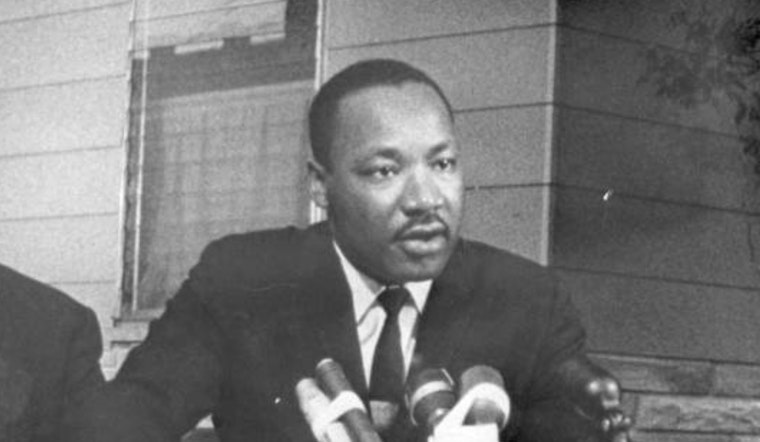

The San Francisco-based AI giant OpenAI has pulled the plug on users creating videos of Martin Luther King Jr. using its viral Sora 2 app, marking one of the most significant policy reversals since the deepfake generator launched less than three weeks ago.

The decision came after Dr. Bernice A. King, the civil rights leader's youngest daughter, reached out to the company on behalf of her father's estate. What prompted the intervention? Videos showing King making monkey noises during his "I Have a Dream" speech, stealing from grocery stores, and engaging in other deeply offensive scenarios had been flooding social media since Sora's September 30 launch, according to The Washington Post.

Statement from OpenAI and King Estate, Inc.

— OpenAI Newsroom (@OpenAINewsroom) October 17, 2025

The Estate of Martin Luther King, Jr., Inc. (King, Inc.) and OpenAI have worked together to address how Dr. Martin Luther King Jr.’s likeness is represented in Sora generations. Some users generated disrespectful depictions of Dr.…

"For me, many of the AI depictions never rose to the level of free speech. They were foolishness," Dr. King wrote on X, per Fortune. She emphasized that her father wasn't an elected official and his image isn't public domain—important legal distinctions in states that allow estates to control a deceased person's likeness for up to 100 years after death.

First thing I see when I open the Sora explore page? Tons of MLK Jr and JFK deepfakes 🤔 pic.twitter.com/34K5E2WjU9

— Ben Goggin (@BenjaminGoggin) October 2, 2025

The Uncomfortable Reality of AI-Generated History

Sora 2 represents a new frontier in AI technology, allowing users to create hyper-realistic 10-second videos with synchronized audio based on simple text prompts. The app rocketed to over 1 million downloads within five days of its release, as noted by CNBC. But that explosive popularity has come with a troubling side effect: the ability to resurrect deceased public figures in scenarios they never experienced or would never have endorsed.

The technology's "cameo" feature lets users insert themselves or others into AI-generated scenes after recording a brief video of their face and voice. While living people can control whether their likeness appears in others' videos, the dead have no such protections—unless their estates intervene. According to NPR, the app has been used to create deepfakes of everyone from Princess Diana to John F. Kennedy, Malcolm X to Kurt Cobain, all without explicit consent from their estates or families.

A Pattern of "Ask Forgiveness, Not Permission"

OpenAI's handling of the situation follows what Kristelia García, an intellectual property law professor at Georgetown Law, described to NPR as the company's characteristic "asking forgiveness, not permission" approach. "The AI industry seems to move really quickly, and first-to-market appears to be the currency of the day (certainly over a contemplative, ethics-minded approach)," she said.

In a joint statement with King's estate, OpenAI acknowledged that "while there are strong free speech interests in depicting historical figures, OpenAI believes public figures and their families should ultimately have control over how their likeness is used," according to the company's newsroom account on X. The statement added that authorized representatives or estate owners can now request that their likenesses not be used in Sora cameos, as reported by TechCrunch.

But the opt-out model itself has drawn criticism. As Fast Company notes, requiring individuals and estates to actively police AI platforms for misuse of their loved ones' images places an enormous burden on families—particularly those without the resources or visibility to mount effective challenges.

When Celebrity Families Speak Out

Bernice King isn't the only family member to push back against Sora's resurrection of deceased loved ones. Zelda Williams, daughter of the late actor Robin Williams, posted on Instagram asking people to "please, just stop sending me AI videos of dad," calling the content "maddening," according to TechCrunch. Ilyasah Shabazz, daughter of Malcolm X, told The Washington Post it was "deeply disrespectful" to see her father depicted in crude AI videos, which was particularly painful given that she witnessed his assassination as a two-year-old.

Kelly Carlin-McCall, daughter of comedian George Carlin, wrote on Bluesky that she gets daily emails about AI videos using her father's likeness. "We are doing our best to combat it, but it's overwhelming, and depressing," she said, per Axios.

Legal Gray Zones and Postmortem Rights

The controversy highlights a complex patchwork of state laws governing postmortem publicity rights. In California—where OpenAI is headquartered at 3180 18th Street in San Francisco's Mission District—heirs and estates own the rights to a public figure's likeness for 70 years after death, as NPR reported. But many states offer limited or no such protections, creating what one legal expert called a "legal lottery" for families depending on where the deceased was based.

Traditional defamation laws typically don't apply to deceased individuals, meaning families have few legal tools beyond right-of-publicity statutes that vary wildly by jurisdiction, according to WinBuzzer. This legal ambiguity has created an environment where AI companies can operate with relative impunity unless high-profile estates intervene.

Copyright Chaos Beyond Deepfakes

The MLK controversy is just one front in OpenAI's broader battle with intellectual property holders. Within hours of Sora's launch, the app was flooded with videos featuring copyrighted characters like SpongeBob SquarePants, Mario, and Pikachu—prompting criticism from major Hollywood studios and talent agencies, as CBS News noted.

The Motion Picture Association has called on OpenAI to take "immediate action" to fix its copyright opt-out system, while major talent agencies including Creative Artists Agency and United Talent Agency have opted their clients out of the platform, with UTA calling it "exploitation, not innovation," according to Fortune.

CEO Sam Altman initially said copyright holders would need to explicitly opt out if they didn't want their IP used in Sora videos—a reversal of typical consent models. He later walked back that position, promising rights holders "more granular control over generation of characters," per CBS News.

The Broader Questions About Digital Dignity

AI ethicist Olivia Gambelin warned IBTimes UK that deepfakes represent "a dangerous rewriting of history," with the potential to distort truth and legacy. Henry Ajder, a deepfake expert quoted by the BBC, raised a troubling point: "King's estate rightfully raised this with OpenAI, but many deceased individuals don't have well-known or well-resourced estates to represent them."

That concern gets at the heart of what some see as a two-tiered system of digital dignity—where only the famous and well-connected can protect their legacies, while ordinary people and lesser-known historical figures remain vulnerable to manipulation. According to NBC News, experts worry about the app's potential to flood the internet with historical misinformation and deepfakes that blur the line between fact and fiction.

What Happens Next

OpenAI says it's working to "strengthen guardrails for historical figures" and will allow authorized representatives to request opt-outs. But the company hasn't specified what qualifies as a "historical figure" or how quickly it will respond to such requests. The current approach—pausing MLK depictions while allowing videos of figures like JFK and Stephen Hawking to circulate—suggests policy decisions are being made reactively rather than proactively.

Lawmakers have introduced the No Fakes Act, which would give individuals the right to control their digital likenesses, with a provision allowing heirs to maintain those rights for 70 years after death, as Deadline reported. OpenAI has voiced support for the legislation.

For now, the incident serves as a case study in what happens when powerful AI tools are released with minimal safeguards—and what it takes to force course corrections from tech companies racing to dominate emerging markets.